Chapter One: Gut Feeling

Earlier this week, David Mills, Amy Alexander, and I had this very debate.

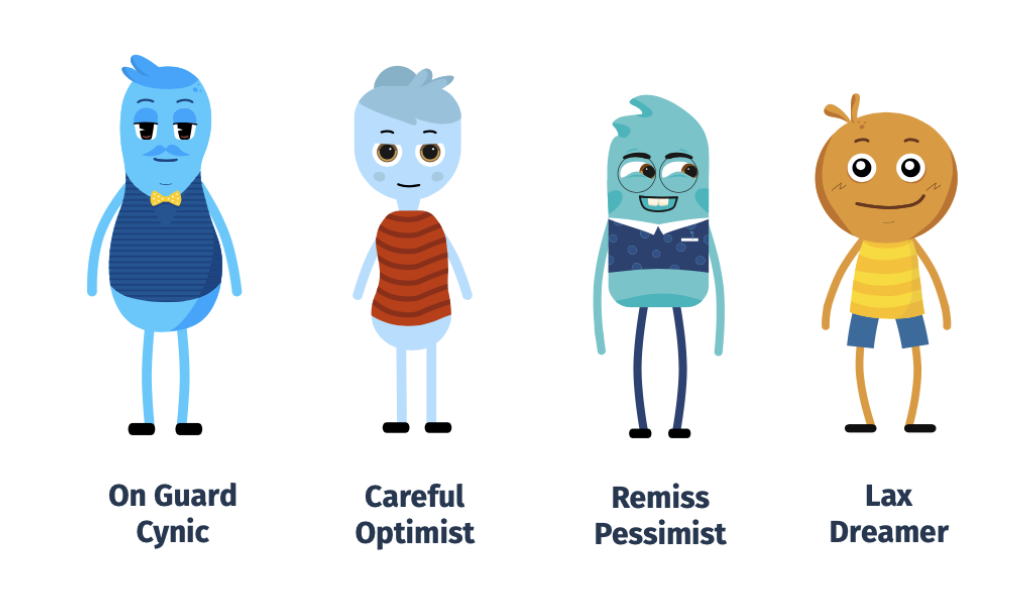

To frame our story, let me introduce our main characters:

- Growth Officer Dave = Team A

- Web Strategist Jen = Team B

- Creative Director Amy = Team C (undecided, that is)

Together we had built a killer landing page for one of our workshops. The title was settled, the graphic CTAs were created, and a pillar page describing the workshop was published.

But Team B (that’s me) wasn’t completely satisfied: “It’s the name of the workshop… It sounds too similar to another workshop, run by another company, that we have all heard of. I don’t like it.”

Team B and Team A debated back and forth about the pros and cons, with Team C interjecting different ideas and opinions along the way.

“Based on all of my research, I think this is the most well-thought-out approach, since everyone already knows the terms and it has really high SEO value,” claimed Team A.

“I see both sides of the argument,” said Team C, so eloquent in her words. “Let me offer another opinion. But keep in mind, all of our assets will need to be re-created if we change the name now.”

It went back and forth like this for a while, but we soon realized we were not going to agree. It didn’t come down to science at all: deep down, Team A and Team B both had gut feelings and assumptions that needed to be tested.

“I don’t have a clear argument,” I said. “I really just have this … gut feeling.”

You may not know this, but gut feelings are actually a very important part of marketing strategy. That’s not to say evidence and best practices aren’t important too. But since we are human, our instincts play a role in our ability to reach other humans. Makes sense, right? Pay attention to your gut feelings, follow them, and see where the rabbit hole leads you. Here at Story, we have several huge marketing success stories that were born out of instinct and gut feelings.

So in the end, we were still all on different teams. And it was time for a little friendly game of A/B Testing.

Chapter Two: A/B Testing

We knew we needed to test our theories. We would call the new workshop by two names, send out an email to our entire marketing list, and use an A/B Call to Action test. Whichever name got the most conversions would win.
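“Most conversions wins” is a fair rule when both names reach about the same number of people. If the two CTAs end up with different audience sizes, comparing conversion *rates* is fairer. Below is a minimal Python sketch of that comparison. The class and function names and all the counts are hypothetical, made up for illustration, not our actual numbers. The pooled two-proportion z-test is an optional extra check that the bet, as we set it up, did not include. It estimates whether the gap between the two rates could plausibly be chance.

```python
from dataclasses import dataclass
from math import erf, sqrt


@dataclass
class Variant:
    name: str         # hypothetical workshop title
    views: int        # people who saw this CTA
    conversions: int  # clicks or sign-ups credited to this CTA

    @property
    def rate(self) -> float:
        return self.conversions / self.views if self.views else 0.0


def pick_winner(a: Variant, b: Variant) -> Variant:
    """Higher conversion rate wins; a tie goes to variant A."""
    return a if a.rate >= b.rate else b


def two_proportion_p_value(a: Variant, b: Variant) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (a.conversions + b.conversions) / (a.views + b.views)
    se = sqrt(pooled * (1 - pooled) * (1 / a.views + 1 / b.views))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (a.rate - b.rate) / se
    # Standard normal CDF: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    return 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))


# Made-up numbers for illustration only, not our actual results.
title_a = Variant("Title A", views=1200, conversions=54)
title_b = Variant("Title B", views=1150, conversions=38)

winner = pick_winner(title_a, title_b)
p = two_proportion_p_value(title_a, title_b)
print(f"{winner.name} wins: {title_a.rate:.2%} vs {title_b.rate:.2%}, p = {p:.3f}")
```

With these invented counts, Title A wins on rate, but the p-value lands above the common 0.05 cutoff. In that case a friendly bet is settled, but a larger list would be needed before treating the result as conclusive.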

“If you win, I’ll buy you a coffee,” offered Team A, who was feeling pretty generous. So I upped the ante: “If you win, I’ll buy you tickets to see the new Star Wars movie.”

The bets were set. Now, to complete the test.

Luckily, we at Story Collaborative use a marketing automation platform called HubSpot (insert shameless self-promotion about our HubSpot Gold partnership), which makes A/B testing extremely easy.

Chapter Three: Setting up the Experiment

Remember science class? No, not the time your teacher made you cut open a dead frog and you almost passed out from the formaldehyde, or the time your dad, who also happened to be the science teacher, bet a student to eat fish eyes in exchange for extra credit…

Yeah, no.

I am talking about the rules around experimentation.

For example, when A/B testing, it is important to remember that you test only ONE element at a time. For our landing pages, the artwork, language, and layout will all stay exactly the same. The only thing being tested is the name of the workshop.

For our A/B Test we are going to need:

- Matching landing pages with different titles

- Matching CTAs with different titles

- One email with both CTAs used as an A/B test (using HubSpot software)

There are two important lessons that apply to any digital marketer’s job:

1: Set a benchmark (this is the "control"):

We don't really need a control group in this situation, but we do need an idea of how our emails, CTAs, and landing pages normally perform. If our results fall well below that benchmark, then maybe something is wrong with our premise.

Examples of things you can benchmark:

- Email open rate

- Click-through rate

- Time on page/site

- Form submissions

- Ad impressions or clicks
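To turn past campaigns into a benchmark, average the rates from previous sends. Here is a minimal Python sketch covering the first two metrics in the list. The `EmailStats` fields and every number are hypothetical placeholders, not figures from our HubSpot account. Note one assumed definition: click-through rate is taken here as clicks divided by delivered emails. Some tools divide by opens instead (click-to-open rate), so pick one definition and use it consistently.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EmailStats:
    delivered: int  # emails that reached an inbox
    opens: int      # unique opens
    clicks: int     # unique clicks on any CTA

    @property
    def open_rate(self) -> float:
        return self.opens / self.delivered

    @property
    def click_through_rate(self) -> float:
        # Assumed definition: clicks / delivered (not clicks / opens).
        return self.clicks / self.delivered


def benchmark(history: list[EmailStats]) -> dict[str, float]:
    """Average open rate and CTR across past sends: our 'control'."""
    return {
        "open_rate": mean(e.open_rate for e in history),
        "click_through_rate": mean(e.click_through_rate for e in history),
    }


# Hypothetical past sends, for illustration only.
past_sends = [
    EmailStats(delivered=2000, opens=500, clicks=60),
    EmailStats(delivered=1800, opens=396, clicks=45),
    EmailStats(delivered=2200, opens=550, clicks=77),
]
baseline = benchmark(past_sends)
print(baseline)
```

Averaging per-send rates weights every email equally. Dividing total clicks by total deliveries instead would give bigger sends more weight. Either is defensible, as long as the same choice is used when you compare against the test.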

Another way of creating a "control" group is by learning your industry standards. In the cosmetics industry, for example, the average email open rate is 30% (OK, OK, I just made that up).

In our test, our average email click-through rate will serve as the benchmark, or control. We will also compare our results against the industry standard for cold leads.

2: Set a hypothesis

If you remember, a hypothesis is an If/Then statement: if you read this blog post, then you will learn that my dad was also my science teacher.

Ok, that's not exactly a good hypothesis statement. But you get the idea...

If: I exercise

Then: I will get stronger

Since this is an A/B test, our hypotheses will be tied to our click-through rate.

If we use a good workshop title, then its click-through rate will be equal to or greater than our average click-through rate.
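The hypothesis can be written as a literal If/Then check: a title "passes" when its click-through rate meets or beats our benchmark. Below is a minimal Python sketch of that check. The function names, the 3% benchmark, and the click counts are all hypothetical, chosen only to show one title passing and one failing. `delivered` stands for the recipients who saw that version of the CTA.

```python
def click_through_rate(clicks: int, delivered: int) -> float:
    if delivered <= 0:
        raise ValueError("delivered must be a positive count")
    return clicks / delivered


def hypothesis_holds(clicks: int, delivered: int, benchmark_ctr: float) -> bool:
    """If the title is good, then its CTR >= our average (benchmark) CTR."""
    return click_through_rate(clicks, delivered) >= benchmark_ctr


# Hypothetical benchmark and counts, for illustration only.
BENCHMARK_CTR = 0.03
results = {
    "Title A": hypothesis_holds(clicks=70, delivered=2000, benchmark_ctr=BENCHMARK_CTR),
    "Title B": hypothesis_holds(clicks=50, delivered=2000, benchmark_ctr=BENCHMARK_CTR),
}
for title, held in results.items():
    print(f"{title}: hypothesis {'supported' if held else 'not supported'}")
```

Clearing the benchmark supports the hypothesis for that title; it does not prove the title is "good." It only tells us the name did no worse than our usual emails.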

Chapter Four: Resolution only happens after Conflict

As you can see, there are multiple measurements we could take here: click-through rate versus on-page conversion, and our average versus the industry average. Marketing is not as black and white as science; it takes into account many variables, as well as the psychology of our target market.

Marketing combines good ideas with studies and analytics, and it still leaves room for rules based on gut feeling.

No Resolution without a Climax

As we prepare to run our experiment, it is important to remember that in every story you have ever read and every movie you have ever watched, there is no resolution without first reaching a climax, sometimes a very stressful one: the conflict that results from a problem.

I will leave you with a quote from the opposing team:

“Marketing Strategies are only based on hypothesis until the experiment is over.”

- David Mills, Growth Officer and Story Ecologist (Team A)

For those who followed our test, the graphic below (and the important service it represents) shows the outcome: my team lost. :(